You might trust a computer with your finances, but would you trust it with your personal safety? What about your public image? Or your career?

These were the debates rattling through a client’s boardroom before consulting firm Generation R Consulting was commissioned to develop artificial intelligence (AI) ethics guidelines for new software.

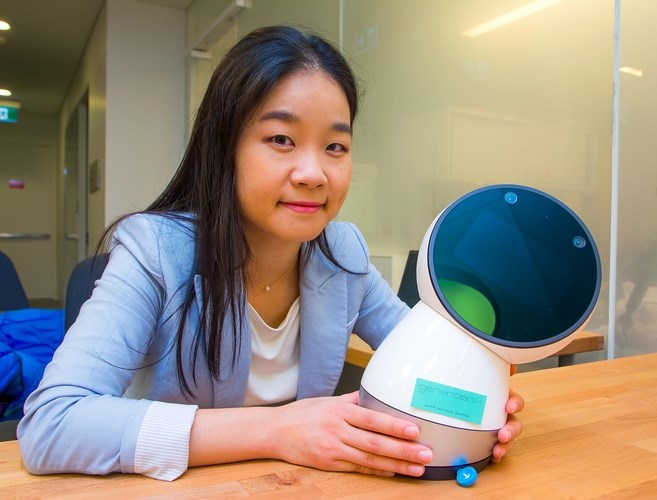

“Because the word AI is there and machine learning is there, there is a lot of fear associated with that,” said Generation R CEO AJung Moon, a roboticist by trade who also serves as director of the Open Roboethics Institute.

The consultancy, based at the University of British Columbia, bills the AI ethics road map it developed for Technical Safety BC as a first of its kind. The road map identifies ethical risks associated with the client’s AI-powered software and recommends ways to mitigate those risks.

Technical Safety BC, formerly the BC Safety Authority, is an independent organization that oversees the installation and operation of technical systems and equipment in the province. It has been developing software for an AI-powered decision support system to more accurately predict technical safety hazards.

“The core notion in the whole field of AI is, how do we design these systems to be fair and transparent and accountable?” Moon said.

Generation R interviewed all the stakeholder groups that would be affected by the technology, and hashed out issues of transparency, trust, public perception and the role of Technical Safety BC’s safety officers.

Part of the goal was to determine how this AI-powered software would affect a safety officer’s job over five to 10 years and gauge how the workers would feel about their own sense of independence as a result of the technology.

In all, Generation R’s AI ethics road map provided 31 points of consideration for its client and five practical recommendations.

“What they’re doing is absolutely necessary,” said engineer Justin Long, head of Canadian business development at Skymind’s Vancouver office.

His San Francisco-based company specializes in deep learning, a type of machine learning that uses extensive amounts of data to make automated decisions about other data. Clients include the Boeing Co. (NYSE:BA) and French telecom giant Orange S.A., and Skymind is now turning its attention to Canadian mining companies that require AI expertise.

“It’s going to be very rare to find a company that is [solely] an artificial intelligence company because most companies develop under a market need.”

Long added that businesses need certified accountants to monitor their finances, so it makes sense that they would also need consultants to provide expertise on AI-powered software.

Before joining Skymind, Long co-founded Bernie A.I., incorporating artificial intelligence in an online dating assistant that could study a user’s writing habits and online behaviour.

It used that information to judge users’ attractiveness and then strike up conversations with people on dating sites by mimicking a user’s writing style.

“At Bernie, there were definitely ethical questions,” Long said. “We tried to take as much care as possible with the small company that we had to say, ‘OK, how do we design this so that we make it good for people, we make it fun for people, we make it so that we’re actually solving a problem?’”

Long said the solution was to veer away from making a generalized assistant and instead train an individual AI-powered assistant for each user.

But Long said these ethical considerations are not a priority for all companies using AI-powered software.

“More and more companies are going to have to [develop ethics guidelines] because it’s going to affect how their businesses operate,” said Robert Clapperton, an assistant professor specializing in data analytics at Ryerson University’s School of Professional Communication.

But he said it might prove challenging to introduce a regulatory framework for companies using AI technology in Canada.

Meanwhile, Moon said one of the reasons her firm is developing the AI ethics road map is to make up for the gaps and grey zones that exist in legislation.
